Foundations of Informational Behaviour

A Structural Framework for Dimensional Collapse, Reconstruction, and Informational Superposition

Pavel Ovcharov

NORFIAD (Network of Research for Innovation and Development), UK

Abstract

This paper proposes a structural framework for the systematic analysis of informational behaviour. Information is defined as a volatile structured construct instantiated on a carrier and rendered under internal condition sets. The framework introduces the Law of Informational Constancy — that within a fully specified and identical condition set, identical informational structures are produced — and derives its consequences for traceability and investigative reasoning. The transmission chain — source, carrier, mediator, influencer, and renderer — is formalised as the structural path through which all informational access is mediated. Dimensional collapse and expansion are introduced as structural transformations governing the relationship between information and its representation on carriers. The Infinite Complexity Barrier is established as a structural consequence of dimensional loss under absent shared context. Abstraction is developed as a key-based recovery mechanism dependent on shared parameter alignment, with conversion lenses formalised as the bidirectional process converting between meaning and representation. Quantitative measures including information density, meaning uncertainty, transmission fidelity, transmission balance, and abstraction deviation are defined in terms of recoverable parameters per carrier unit. Informational superposition is formalised as bounded multiplicity arising from incomplete reconstruction constraints, with directional openness graded through the principle of limited infinity. The information cycle is described as the structural path through which information passes from source through interpretation and free processing to re-fixation. Seven structural properties of informational behaviour are identified — including distributed instantiation, logical cross-influence, and differential inference — each derived as a property of information itself. 
The framework establishes a coherent basis for the systematic study of informational behaviour across domains while remaining implementation-neutral and compatible with established theory.

Keywords: information structure; dimensional collapse; informational superposition; abstraction; fixation spectrum; information density; volatile information; informational constancy; reconstruction; transmission fidelity.

1. Introduction

Information is discussed across disciplines in terms of encoding, transmission, storage, cognition, computation, and physical realisation. Statistical frameworks quantify uncertainty in transmission channels. Computational models formalise symbolic manipulation. Cognitive theories investigate representation and interpretation. Physical theories address material carriers. Yet the structural behaviour of information itself — independent of specific implementation — remains insufficiently unified.

Most formal treatments measure carrier states, transmission efficiency, statistical entropy, or computational complexity. Far fewer attempt to formalise how information structurally behaves when reconstructed, how incompleteness shapes interpretation, how multiplicity emerges under partial constraint, how stabilisation occurs, how informational states resist or admit alteration, or how dependency propagates across informational structures.

Classical information theory, as formalised by Shannon (1948), provides a rigorous framework for measuring uncertainty reduction in symbol transmission across communication channels. In that formulation, semantic aspects of communication are explicitly excluded in order to isolate the engineering problem of reliable transmission. Shannon’s framework successfully describes carrier behaviour and channel uncertainty. The present framework does not revise or contradict Shannon’s theory. Rather, it addresses a complementary structural layer: the behaviour of informational content during collapse, expansion, reconstruction, and stabilisation — precisely the layer Shannon intentionally excluded from his model.

This paper proposes a structural framework for informational behaviour that is implementation-neutral. It applies equally to physical systems, symbolic systems, cognitive systems, biological systems, and computational systems. The objective is to describe how information behaves structurally once generated, transmitted, reconstructed, and stabilised.

The framework proceeds from several foundational observations: information production is deterministic under identical conditions; informational description of structured objects requires multiple independent descriptive directions for full specification; reduction of descriptive dimensionality results in loss of reconstructive determinacy; reconstruction without shared descriptive parameters results in non-terminating explanatory expansion; and under incomplete reconstruction constraints, multiple admissible interpretations may coexist prior to stabilisation.

2. Definition of Information

Information is defined as a volatile structured construct instantiated on a carrier and rendered under internal condition sets.

Information does not exist independently of interaction. It arises when source parameters — the properties of a source object or system — interact with a carrier through mediating parameters. The resulting instantiation is volatile: it may be altered under influence and requires rendering to stabilise into interpreted structure.

Volatility does not imply randomness. It implies susceptibility to alteration. The instantiated construct possesses internal organisation — relations among parameters that form a describable configuration.

Rendering occurs when a system possessing internal condition sets reconstructs descriptive structure from the carrier state. The rendered informational structure depends on both transmitted parameters and internal reconstruction conditions.

Information is not identical to the carrier that stores or transmits it. The same information may be instantiated across multiple carriers without loss of identity. This distinction between information and carrier is foundational to the framework developed in this paper.

3. Law of Informational Constancy

3.1 Statement

Within a fully specified and identical condition set, identical informational structures are produced.

Within a defined condition set, informational production is structurally deterministic. Given identical conditions, identical information is produced. This is a structural property of informational behaviour under defined conditions, independent of the specific implementation systems involved.

A condition set comprises external conditions (source parameters, carrier properties, mediating compatibility), internal conditions (prior parameter knowledge, contextual alignment, interpretive rules, logical frameworks), and the influence environment acting on the carrier during transmission.

Formally, let K denote a complete condition set and I the information produced:

K₁ = K₂ ⟹ I₁ = I₂

The law applies across all domains where information is generated, transmitted, or rendered: physical systems, communication systems, cognitive systems, computational systems, and investigative processes.
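The formal statement can be illustrated with a minimal Python sketch. It assumes that a complete condition set can be modelled as an immutable record and that production is a pure function of that record. The `ConditionSet` fields and the body of `produce` are hypothetical illustrations, not part of the framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConditionSet:
    """Hypothetical complete condition set K."""
    external: tuple[str, ...]   # e.g. source parameters, carrier properties
    internal: tuple[str, ...]   # e.g. prior knowledge, interpretive rules
    influence: tuple[str, ...]  # influences acting on the carrier in transit


def produce(k: ConditionSet) -> tuple[str, ...]:
    """Hypothetical production function: depends on K and nothing else.

    Because no hidden state enters the computation, equal condition sets
    necessarily yield equal informational structures (K1 = K2 implies I1 = I2).
    """
    return tuple(sorted(k.external + k.internal + k.influence))


k1 = ConditionSet(("red", "round"), ("english",), ("dim light",))
k2 = ConditionSet(("red", "round"), ("english",), ("dim light",))
print(k1 == k2, produce(k1) == produce(k2))  # True True
```

The sketch demonstrates only the stated direction of the law. It does not claim that distinct condition sets must produce distinct information.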

3.2 Consequence: Traceability

If information exists, the conditions that produced it must exist or must have existed at the moment of its creation. If tracing back to that moment fails to identify the conditions, they must still be found: their absence from observation does not imply their absence from reality. Investigation requires locating them.

3.3 Consequence: Observation Over Interpretation

If observable conditions contradict an interpretation derived from those conditions, the interpretation is wrong. Observable conditions take precedence over interpretation. When what is observed conflicts with what has been concluded, the conclusion must yield to the observation.

3.4 Critical Principle: Condition Identification

Correct application of the Law of Informational Constancy requires precise identification of conditions as they are, not as they are commonly interpreted to be.

The presence of smoke is frequently interpreted as evidence of fire. While fire may produce smoke, smoke can result from heating, evaporation, sublimation, or other chemical processes that do not involve combustion. The formal inference smoke ⟹ fire is invalid without additional condition specification. The observation of smoke remains valid regardless of cause. What fails is the assumed generating condition, not the informational state itself.

Substituting familiar interpretation for precise condition identification is the primary failure mode in investigation. The law does not guarantee correct interpretation; it guarantees consistency under correctly identified conditions. Errors arise not from informational indeterminacy but from incomplete or incorrect condition modelling.

4. Transmission Structure

Source parameters — the properties of a source object or system — are never directly accessible. All observation is necessarily mediated through transmission. What the carrier picks up is a volatile reflection of the source parameters, constrained by the interaction that created it. This makes the transmission framework necessary for understanding how information is accessed.

Informational transmission involves five structural components operating as a chain from source to renderer.

4.1 Source

The source is the object or system whose parameters give rise to informational description during interaction. It need not be intentional. A burning log, a digital processor, a neuron, or a symbolic system may serve as a source.

4.2 Carrier

A carrier is any entity that temporarily instantiates volatile informational structure generated through interaction with a source. A source becomes a carrier when two conditions are met: it shares a property with another source (property match), and it is in motion relative to the system it belongs to. Without shared property, no transfer is possible. Without motion, no delivery occurs.

4.3 Mediator

Mediators are the properties themselves that determine influence relationships — the mechanism by which influence reaches a carrier. A carrier with magnetic properties can be affected by a magnetic influencer because those properties mediate that influence. A carrier without magnetic properties cannot be affected by the same influencer. If a carrier is affected by an influencer, a mediating property must exist. This follows from the Law of Informational Constancy — the conditions that produced the effect must be present and findable.

4.4 Influencer

Influencers are alternative conditions that modify the carrier’s temporary state through mediating properties. They do not cancel the carried information outright; they alter it. The resulting informational state of the carrier reflects the cumulative effect of the original source interaction and all subsequent influences encountered prior to rendering.

4.5 Renderer

The renderer is the final influence in the transmission chain. The received information reflects the source parameters as altered by every carrier, mediator, and influencer along the path, with the renderer’s own influence applied last. Rendering does not access the source directly. It operates on the transformed volatile state present on the carrier.

4.6 Informational Cascade

Influence on one mediating property propagates through every connected property in the carrier. The total information transformation is the full chain of affection across all carrier properties — not only the directly affected property. Tracking only the directly affected property leads to incomplete measurement and failed reconstruction. The total information change includes every property affected by the cascade, whether the change is directly visible or not.
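The cascade can be read as reachability in a graph of carrier properties. The following Python sketch assumes that each carrier property lists the properties its change affects; the property names and links are hypothetical. A breadth-first traversal from the directly affected property returns the full set of properties the influence reaches.

```python
from collections import deque

# Hypothetical property graph of one carrier: an edge means that a change in
# the first property alters the second.
CARRIER_PROPERTIES = {
    "temperature": ["volume", "resistance"],
    "volume": ["density"],
    "resistance": ["current"],
    "density": [],
    "current": [],
    "mass": [],
}


def cascade(start: str, graph: dict[str, list[str]]) -> set[str]:
    """Every property reached when influence acts on `start` (breadth-first)."""
    affected = {start}
    queue = deque([start])
    while queue:
        prop = queue.popleft()
        for nxt in graph.get(prop, []):
            if nxt not in affected:
                affected.add(nxt)
                queue.append(nxt)
    return affected


print(sorted(cascade("temperature", CARRIER_PROPERTIES)))
# ['current', 'density', 'resistance', 'temperature', 'volume']
```

Measuring only `temperature` would miss four of the five affected properties, while `mass`, which no path reaches, is correctly left out.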

5. Informational Incompleteness

The total parameters of a single source are never rendered in their entirety simultaneously by a single renderer. Informational representations are structurally incomplete. No single transmission state contains all descriptive parameters of the source.

Only those parameters compatible with mediating properties are instantiated. Other parameters remain absent from the carrier. Recovery of absent parameters depends on shared context within the rendering system. Incompleteness is not failure of communication. It is a structural condition of mediated interaction and a primary driver of reconstruction variance.

6. Dimensional Collapse

Informational description of structured objects requires multiple independent descriptive directions for full specification. A dimension, in this framework, denotes an independent descriptive axis necessary to determine the object without ambiguity.

A three-dimensional object cannot be fully represented without preserving three independent descriptive directions. When information about such an object is recorded on a carrier, dimensional reduction necessarily occurs.

Consider a cube. A cube possesses three independent spatial dimensions. When represented on a two-dimensional surface — a drawing or projection — one dimension is collapsed. The result is not the cube itself but a projection: a shadow that preserves some relations while losing others. When further reduced to a one-dimensional representation — a symbolic line or linear code — additional descriptive directions are lost.

At each collapse, independent descriptive directions are removed, determinacy decreases, and multiple higher-dimensional structures may correspond to the reduced representation. A line derived from collapsing a square cannot uniquely determine the square from which it originated. A square derived from collapsing a cube cannot uniquely determine the cube. Loss of dimensionality introduces reconstructive multiplicity.

Dimensional collapse therefore explains why representation is incomplete: lower-dimensional encodings preserve constraints but eliminate independent descriptive directions.
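The cube example can be made concrete with a short Python sketch. It assumes boxes with integer edge lengths and an orthographic projection that simply discards the depth axis. The bound `max_depth` is a hypothetical limit that keeps the set of candidate preimages finite.

```python
def project_to_plane(width: int, height: int, depth: int) -> tuple[int, int]:
    """Collapse a 3-D box onto the xy-plane by discarding the depth axis."""
    return (width, height)


def preimages(shadow: tuple[int, int], max_depth: int) -> list[tuple[int, int, int]]:
    """Every box (integer depth 1..max_depth) whose projection equals `shadow`."""
    width, height = shadow
    return [
        (width, height, depth)
        for depth in range(1, max_depth + 1)
        if project_to_plane(width, height, depth) == shadow
    ]


# A 2x2x2 cube and a 2x2x5 box cast the same square shadow.
print(project_to_plane(2, 2, 2) == project_to_plane(2, 2, 5))  # True
print(len(preimages((2, 2), max_depth=5)))  # 5
```

The square (2, 2) is consistent with the cube and with every other box of that footprint, so the reduced representation cannot single out the cube.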

7. The Infinite Complexity Barrier

Because dimensional collapse removes independent descriptive directions, reconstruction requires supplementation. If the renderer lacks shared descriptive parameters sufficient to restore the missing directions, reconstruction becomes underdetermined. Every attempt to specify a missing property requires additional specification. Each additional specification introduces further distinctions requiring clarification.

This process may become non-terminating. This structural condition constitutes the Infinite Complexity Barrier.

The barrier does not arise from computational limitation. It arises from dimensional insufficiency relative to reconstructive requirements. Without shared parameters capable of restoring lost descriptive directions, complete reconstruction cannot terminate.
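A toy Python model of the regress follows. It assumes that each term can be specified only through other terms and that reconstruction stops at terms already covered by shared context. The vocabulary in `DEFINITIONS` is hypothetical and deliberately circular, and the step cap stands in for non-termination, since an example cannot run forever.

```python
from collections import deque

# Hypothetical, deliberately circular vocabulary: every term is specified
# only through other terms.
DEFINITIONS = {
    "red": ["colour", "wavelength"],
    "colour": ["light", "perception"],
    "wavelength": ["light", "distance"],
    "light": ["wavelength", "perception"],
    "perception": ["observer", "colour"],
    "observer": ["perception"],
    "distance": ["wavelength"],
}


def reconstruct(term: str, shared: set[str], max_steps: int = 1000) -> tuple[bool, int]:
    """Specify `term` until every pending term is covered by shared context.

    Returns (terminated, steps). The step cap stands in for non-termination.
    """
    pending = deque([term])
    steps = 0
    while pending:
        if steps >= max_steps:
            return False, steps
        current = pending.popleft()
        steps += 1
        if current in shared:
            continue  # shared context restores this term; no further specification
        pending.extend(DEFINITIONS.get(current, []))
    return True, steps


print(reconstruct("red", shared=set()))                    # (False, 1000)
print(reconstruct("red", shared={"colour", "wavelength"}))  # (True, 3)
```

With an empty shared context the pending queue never empties, because every specification introduces further terms. Supplying the two shared parameters `colour` and `wavelength` lets the same reconstruction finish in three steps.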

8. Abstraction and Conversion Lenses

8.1 Abstraction as Dimensional Recovery

An abstraction is a lower-dimensional representation that relies on latent descriptive alignment within the renderer’s internal condition set. The abstraction itself does not contain the full object. It preserves a constrained projection. Reconstruction succeeds only when the renderer possesses internal parameters capable of re-expanding the collapsed descriptive directions.

Collapse reduces explicit dimensionality. Shared context restores implicit dimensionality. Reconstruction is conditional on parameter overlap. Abstraction does not eliminate dimensional loss — it compensates for it through internal alignment.

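The quality of an abstraction is measured in the descriptive directions, and hence the parameters, lost during dimensional collapse that it allows the renderer to recover; in this framework, each dimension corresponds to a parameter. The more parameters an abstraction recovers, the higher its quality.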

8.2 Conversion Lenses

The process that converts between free information (meaning) and abstraction is called a conversion lens. Lenses are bidirectional.

The same free information can be converted to different abstractions through different lenses. The meaning "apple" is one free information object. Through an English lens it becomes "apple." Through a Chinese lens it becomes "苹果." Same meaning, different abstractions, different lenses.

The same abstraction can be converted to multiple meanings through a single lens. The abstraction "fire" converts to campfire, building fire, vehicle fire, chemical reaction, and employment termination — each a distinct meaning with its own full set of properties. These meanings share some properties (which is why they share the abstraction) but diverge on others.

A signal requires the correct conversion lens to become meaning. With the wrong lens, the same signal becomes noise. The signal does not change. The lens determines whether it is information or noise.
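The two directions of a conversion lens can be sketched in Python as a mapping from meanings to abstractions together with its inverse multimap. The `Lens` class and the vocabulary below are hypothetical illustrations; an empty result stands for a signal that the lens renders only as noise.

```python
class Lens:
    """Hypothetical bidirectional conversion lens between meanings and abstractions."""

    def __init__(self, name: str, encoding: dict[str, str]) -> None:
        self.name = name
        self.encoding = encoding  # meaning -> abstraction
        # Invert the mapping: one abstraction may stand for several meanings.
        self.decoding: dict[str, list[str]] = {}
        for meaning, abstraction in encoding.items():
            self.decoding.setdefault(abstraction, []).append(meaning)

    def to_abstraction(self, meaning: str) -> str:
        """Meaning -> abstraction."""
        return self.encoding[meaning]

    def to_meanings(self, abstraction: str) -> list[str]:
        """Abstraction -> admissible meanings; empty means noise to this lens."""
        return self.decoding.get(abstraction, [])


english = Lens("English", {
    "apple (fruit)": "apple",
    "campfire": "fire",
    "building fire": "fire",
    "employment termination": "fire",
})
chinese = Lens("Chinese", {"apple (fruit)": "苹果"})

print(english.to_abstraction("apple (fruit)"), chinese.to_abstraction("apple (fruit)"))
print(english.to_meanings("fire"))
print(chinese.to_meanings("fire"))  # []
```

Inverting a many-to-one encoding is what turns a single abstraction into several admissible meanings, while a lens with no entry for the signal yields nothing.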

8.3 Filtering

Information on a carrier, having fixation, can be filtered by passing the carrier through a filter. The filter selects — information passes through or does not. Free information cannot be filtered in the same way. Applying a filter to free information produces different meaning or different resulting information. The filter does not select — it transforms. This is the action of a conversion lens.

8.4 Rendering and the Law of Informational Constancy

Let A denote an instantiated abstraction, L an internal condition set (the lens), and M the reconstructed meaning. Rendering is expressed as:

R(A, L) → M

The law in rendering form:

(A₁ = A₂) ∧ (L₁ = L₂) ⟹ M₁ = M₂

Variability arises from differences in conditions, not from arbitrariness of information.

9. Quantitative Measures

9.1 Information Density

Information density measures reconstructive efficiency — the ratio between descriptive parameters successfully reconstructed and the carrier units required to deliver the abstraction. Let Pᵣ denote the number of parameters recovered at reconstruction and n denote the number of carrier units used:

D = Pᵣ / n

In deterministic systems where rendering is governed by stable internal condition sets, density may also be expressed as the number of carrier units that would be needed to describe the recovered parameters explicitly, divided by the number of units actually used as the key.

Maximum density is approached when shared context is complete and the key is minimal — the receiver reconstructs the full intended informational structure using the minimal carrier representation permitted by the system. Without shared context, density approaches zero. Information density measures reconstructive efficiency rather than transmission uncertainty. It evaluates what is reconstructed at the receiver rather than what is transmitted through the channel.
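Information density reduces to a single division. In this minimal Python sketch the figures are hypothetical: the five-character abstraction "apple" (n = 5) is assumed to let a receiver with shared context recover 20 descriptive parameters, and a receiver without that context to recover none.

```python
def information_density(params_recovered: int, carrier_units: int) -> float:
    """D = P_r / n: descriptive parameters recovered per carrier unit used."""
    if carrier_units <= 0:
        raise ValueError("at least one carrier unit is required")
    return params_recovered / carrier_units


print(information_density(20, 5))  # 4.0, with shared context
print(information_density(0, 5))   # 0.0, without shared context
```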

9.2 Meaning Uncertainty

When an abstraction admits multiple admissible reconstructions, the received informational state exhibits uncertainty prior to rendering. Let P denote the number of descriptive properties per admissible meaning and V the number of admissible variations within the receiver’s condition set:

U = P × V

Meaning uncertainty resides in the receiver’s reconstruction domain, not in the transmitted carrier itself. The more meanings assigned to a single abstraction, the more undetermined the free information is on reception. Uncertainty does not increase information — it reduces effective recovery.
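A worked instance of U = P × V in Python follows. It assumes, hypothetically, that every admissible meaning carries the same number of descriptive properties, P = 10, and compares a receiver whose condition set admits the five meanings of "fire" listed in Section 8.2 with one whose context admits a single meaning.

```python
def meaning_uncertainty(props_per_meaning: int, admissible_variations: int) -> int:
    """U = P * V, evaluated in the receiver's reconstruction domain."""
    return props_per_meaning * admissible_variations


print(meaning_uncertainty(10, 5))  # 50: five admissible meanings of "fire"
print(meaning_uncertainty(10, 1))  # 10: context admits one meaning
```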

9.3 Information Transmitted

Let R denote recovered units, n carrier units used, and V admissible variations:

Iₜ = R / (n × V)

As admissible variation increases, transmitted information per carrier unit decreases. More variants in the receiver means less information gets through per carrier unit.
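A Python sketch of Iₜ with hypothetical figures, R = 20 recovered units over n = 5 carrier units, shows the effect of admissible variation on what gets through per carrier unit.

```python
def information_transmitted(recovered: float, carrier_units: int, variations: int) -> float:
    """I_t = R / (n * V): recovered units per carrier unit, diluted by admissible variants."""
    if carrier_units <= 0 or variations <= 0:
        raise ValueError("n and V must both be positive")
    return recovered / (carrier_units * variations)


print(information_transmitted(20, 5, 1))  # 4.0: a single admissible variant
print(information_transmitted(20, 5, 5))  # 0.8: five admissible variants
```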

9.4 Transmission Fidelity

Let Dₛ denote sender density and Dᵣ receiver density:

Fₜ = Dᵣ / Dₛ

Fidelity of 1 indicates proportional reconstruction. Below 1 indicates informational loss. Above 1 indicates contextual augmentation — the receiver reconstructed more than the sender intended, as shared context filled in parameters the sender did not deliberately transmit.

9.5 Transmission Balance

Bₜ = Dᵣ − Dₛ

Zero indicates balance. Negative values indicate loss, with the magnitude giving the number of parameters lost per carrier unit. Positive values indicate augmentation, with the magnitude giving the number of parameters per carrier unit that were unintentionally transmitted. Balance measures the actual differential in absolute units rather than as a proportion, as fidelity does.
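Fidelity and balance compare the same two densities in different ways. The Python sketch below, with hypothetical densities, makes the contrast explicit; `describe` is an illustrative helper, not part of the framework.

```python
def transmission_fidelity(d_sender: float, d_receiver: float) -> float:
    """F_t = D_r / D_s: 1 proportional, below 1 loss, above 1 augmentation."""
    if d_sender <= 0:
        raise ValueError("sender density must be positive")
    return d_receiver / d_sender


def transmission_balance(d_sender: float, d_receiver: float) -> float:
    """B_t = D_r - D_s: 0 balanced, negative loss, positive augmentation."""
    return d_receiver - d_sender


def describe(d_sender: float, d_receiver: float) -> str:
    """Hypothetical helper naming the sign of the balance."""
    balance = transmission_balance(d_sender, d_receiver)
    if balance == 0:
        return "balanced"
    return "loss" if balance < 0 else "augmentation"


for d_r in (3.0, 4.0, 5.0):  # hypothetical receiver densities against D_s = 4.0
    print(d_r, transmission_fidelity(4.0, d_r), transmission_balance(4.0, d_r), describe(4.0, d_r))
```

For a sender density of 4.0, receiver densities of 3.0, 4.0 and 5.0 give fidelities of 0.75, 1.0 and 1.25 and balances of -1.0, 0.0 and 1.0: the ratio expresses loss or augmentation proportionally, while the difference gives it in parameters per carrier unit.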

9.6 Abstraction Deviation

In asynchronous or multi-receiver systems, the same abstraction may be reconstructed differently across renderers. Let Pₘ denote matching parameters and Pₓ mismatching parameters:

Dₐ = Pₓ / Pₘ

Zero indicates complete agreement. Division by zero cannot occur: if a signal is received and read, at least one parameter matches by the act of reception itself. The deviation threshold determines usability — below threshold, the received information is usable; above threshold, the received information is noise.

Abstraction deviation measures shared context quality between receivers indirectly — without measuring shared context itself.
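A Python sketch of abstraction deviation between two renderers follows, assuming each reconstruction can be listed as named parameters with values. The parameter names, the treatment of parameters present on only one side, and the threshold of 0.5 are hypothetical choices made for illustration.

```python
def abstraction_deviation(params_a: dict[str, str], params_b: dict[str, str]) -> float:
    """D_a = P_x / P_m between two renderers' reconstructions of one abstraction.

    Assumption: a parameter reconstructed by only one renderer counts as mismatching.
    """
    all_params = params_a.keys() | params_b.keys()
    matching = sum(
        1 for p in all_params
        if p in params_a and p in params_b and params_a[p] == params_b[p]
    )
    if matching == 0:
        raise ValueError("no matching parameter: the signal was not received and read")
    return (len(all_params) - matching) / matching


def is_usable(params_a: dict[str, str], params_b: dict[str, str], threshold: float) -> bool:
    """Below the (hypothetical) threshold the reconstruction is usable; otherwise noise."""
    return abstraction_deviation(params_a, params_b) < threshold


renderer_a = {"category": "fire", "setting": "outdoor", "scale": "small", "fuel": "wood"}
renderer_b = {"category": "fire", "setting": "outdoor", "scale": "large", "fuel": "wood"}
print(abstraction_deviation(renderer_a, renderer_b))  # 0.3333333333333333
print(is_usable(renderer_a, renderer_b, threshold=0.5))  # True
```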

9.7 Fundamental Principle: Shared Space Requires Matching Parameters

Systems with zero matching parameters cannot share a space. Shared space is defined by the presence of matching parameters: without them, systems cannot interact, transfer, or influence each other. This functions as a structural existence condition within the framework. It applies at every scale: a source becomes a carrier when it shares a property with another source; a carrier transfers information within a system it belongs to; systems share a parent system through shared conditions.

Multiple systems with zero matching parameters can nevertheless be co-located without interacting. They are invisible to each other, not because of distance or separation, but because zero matching parameters means no shared space, and therefore no interaction, detection, transfer, or influence. Bridging between such systems requires establishing at least one matching parameter.
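The existence condition can be expressed as a set intersection. This Python sketch assumes each system can be summarised by a set of parameter labels (the labels are hypothetical): two systems share a space exactly when the intersection is non-empty, and a bridge is any system that matches a parameter on each side.

```python
def shared_space(params_a: set[str], params_b: set[str]) -> set[str]:
    """The matching parameters that define the space two systems share."""
    return params_a & params_b


def can_interact(params_a: set[str], params_b: set[str]) -> bool:
    """Interaction, transfer, or influence requires at least one matching parameter."""
    return len(shared_space(params_a, params_b)) > 0


system_a = {"acoustic"}
system_b = {"electromagnetic"}
bridge = {"acoustic", "electromagnetic"}  # matches a parameter on each side

print(can_interact(system_a, system_b))                                   # False
print(can_interact(system_a, bridge) and can_interact(bridge, system_b))  # True
```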

10. Dimensional Expansion and Informational Superposition

10.1 Dimensional Expansion

Converting information upward through dimensions produces the opposite effect of collapse. A one-dimensional piece of information converted to two dimensions acquires multiple possible meanings: a line becomes all possible two-dimensional shapes consistent with its properties. Each of those two-dimensional shapes in turn admits many possible three-dimensional objects. One-dimensional information converted directly to three dimensions produces all possible three-dimensional objects consistent with the one-dimensional input. Every additional dimension multiplies the number of possible interpretations.
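The multiplication can be counted directly. The Python sketch below assumes integer extents and a hypothetical bound `max_extent` on each newly added axis, so that the otherwise unbounded sets stay finite; the line's known length is preserved in every expansion.

```python
def expand(length: int, max_extent: int):
    """Expand a 1-D line of known length into 2-D shapes, then 3-D objects."""
    extents = range(1, max_extent + 1)
    shapes_2d = [(length, w) for w in extents]
    objects_3d = [(length, w, d) for (_, w) in shapes_2d for d in extents]
    return shapes_2d, objects_3d


shapes_2d, objects_3d = expand(length=3, max_extent=4)
print(len(shapes_2d), len(objects_3d))  # 4 16
```

Each added axis multiplies the count by the number of admissible extents on that axis, while every candidate keeps the original length of 3.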

10.2 Informational Superposition

When a lower-dimensional abstraction is expanded within a higher-dimensional descriptive space under incomplete constraints, multiple admissible reconstructions may satisfy the preserved representation. This state of bounded multiplicity constitutes informational superposition.

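Let A be an abstraction, L an internal condition set, and L_valid the set of internal condition sets under which reconstruction of A remains consistent with its preserved constraints. The admissible reconstruction set Ω(A) consists of all reconstructions produced under those condition sets: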

Ω(A) = { R(A, L) | L ∈ L_valid }

Superposition exists when |Ω(A)| > 1. Rendering under a specific internal condition set Lₖ resolves multiplicity locally, yielding a single Mₖ. This resolution does not eliminate alternative admissible reconstructions in other contexts. Different renderers may fix different states from the same free information.

Superposition is constrained by the starting system. The constraints of the starting information define the scope of possible variations. A one-dimensional line can expand into all possible two-dimensional shapes, but only those consistent with the line’s length and properties. The starting information limits the expansion.

Superposition is therefore structured, bounded, constraint-dependent, and locally resolvable. It is not randomness. It is multiplicity permitted by preserved directional openness.
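The definition of Ω(A) translates almost literally into Python. In this sketch the abstraction is a line of length 3, each internal condition set is assumed to supply the missing width, and `VALID_LENSES` is a hypothetical stand-in for L_valid.

```python
def render(length: int, lens: dict[str, int]) -> tuple[int, int]:
    """R(A, L): the renderer's condition set supplies the collapsed width."""
    return (length, lens["width"])


def admissible_set(length: int, valid_lenses: list[dict[str, int]]) -> set[tuple[int, int]]:
    """Omega(A) = { R(A, L) | L in L_valid }."""
    return {render(length, lens) for lens in valid_lenses}


VALID_LENSES = [{"width": 1}, {"width": 2}, {"width": 5}]  # hypothetical L_valid

omega = admissible_set(3, VALID_LENSES)
print(len(omega) > 1)  # True: the abstraction is in superposition

m_k = render(3, VALID_LENSES[1])  # one renderer resolves locally
print(m_k, m_k in omega)  # (3, 2) True
```

Resolving under one condition set yields the single reconstruction (3, 2) but leaves `omega` unchanged: local resolution does not eliminate the alternatives available to other renderers.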

10.3 Directional Openness and Limited Infinity

Dimensional structure alone does not determine determinacy. What matters is the number of remaining open expansion directions within the descriptive space.

Each independent axis possesses two possible directional continuities. A dimension therefore contains two expansion directions. In three-dimensional descriptive space: the x-axis contributes two directions, the y-axis two directions, and the z-axis two directions. Maximum directional openness in three dimensions equals six expansion directions.

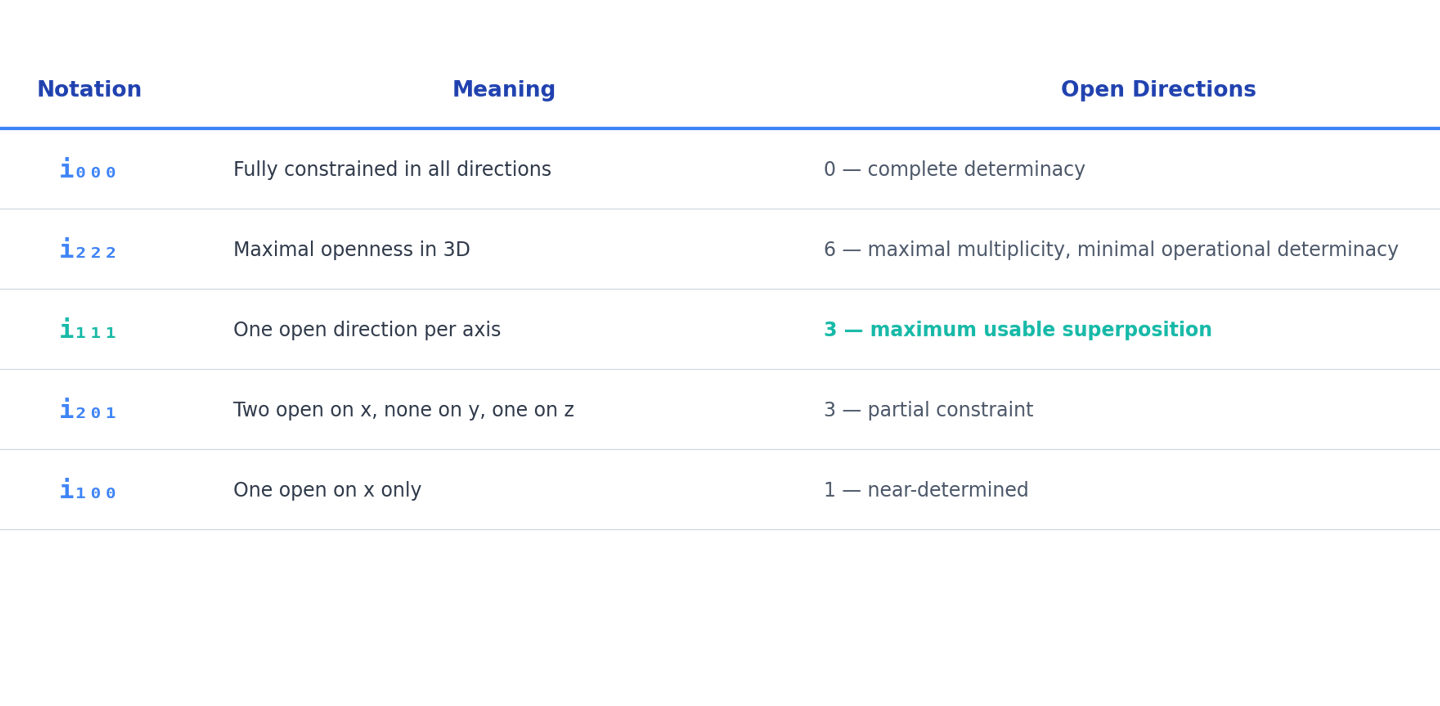

We denote directional openness as iₓₙₘ, where the three subscript positions correspond to the three independent axes and each indicates the number of open directions on that axis: 0 (no open directions; fully constrained), 1 (one open direction), or 2 (two open directions).

This notation does not measure numerical infinity. It measures structural directional openness. Directional openness defines the degree of reconstructive multiplicity permitted within the system.
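A small Python helper for the iₓₙₘ notation follows. It assumes each axis is recorded as an integer in {0, 1, 2} and reports the total number of open directions as the sum of the per-axis values, consistent with the six-direction maximum stated above; the helper names are hypothetical.

```python
SUBSCRIPTS = {0: "\u2080", 1: "\u2081", 2: "\u2082"}  # subscript zero, one, two


def open_directions(openness: tuple[int, int, int]) -> int:
    """Total open expansion directions across the three axes (0 to 6)."""
    if len(openness) != 3 or any(v not in SUBSCRIPTS for v in openness):
        raise ValueError("each of the three axes must have 0, 1, or 2 open directions")
    return sum(openness)


def notation(openness: tuple[int, int, int]) -> str:
    """Format an openness tuple in the document's notation, e.g. (1, 1, 1) -> i₁₁₁."""
    open_directions(openness)  # validate before formatting
    return "i" + "".join(SUBSCRIPTS[v] for v in openness)


for state in [(0, 0, 0), (1, 1, 1), (2, 2, 2)]:
    print(notation(state), open_directions(state))
```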

10.4 Collapse and Openness Interaction

A one-dimensional line of known length is fully determined within its own dimension. However, when embedded in two-dimensional space, that line may expand relative to the additional orthogonal axis. The line is fixed in its intrinsic dimension but open in the additional direction.

Dimensional embedding therefore alters directional openness even when intrinsic constraints remain fixed. Determinacy is relative to dimensional context.

10.5 Usability and Constraint

Usability depends on partial constraint. At i₂₂₂ — maximum openness in three dimensions — reconstruction space is maximal and determinacy minimal. At i₀₀₀, determinacy is maximal and flexibility absent.

Intermediate states such as i₁₁₁ represent balanced systems in which structure is preserved while expansion remains possible. Three known parameters, three directions of expansion. The structure is fixed; the content floats.

Informational systems operate most effectively in intermediate openness states where sufficient constraint ensures usability while sufficient openness permits adaptability. Information system design is choosing what to render and what to leave in superposition. Fix too much — flexibility is lost. Fix too little — the information is not usable.

11. Free Information, Processing, and the Information Cycle

11.1 Free Information

Free information is information with no fixation — not on a carrier, zero resistance to alteration, existing only during processing.

In the absence of carrier-imposed dimensional constraints, informational states are not restricted by lower-dimensional embedding and may expand along all independent descriptive directions available within the system. Without a carrier to constrain it, information is not bound to fewer dimensions — a line requires a surface, a surface requires a body. Without such constraints, all descriptive directions are structurally available.

Information space denotes the domain defined by internal condition sets during processing, independent of physical carrier constraint. It is an operational modelling term — the space within which free information is processed, transformed, and combined.

Free information is fully flexible within information space: it can reshape, combine, divide, and transform without constraint. Without fixation, informational states have no stable physical instantiation and therefore exist only within active processing contexts.

An abstraction is in a free informational state when |Ω(A)| > 1 and no stabilizing rendering has reduced the admissible reconstruction set. Free information is characterized by multiplicity of admissible states, absence of stabilizing condition resolution, and dependence on subsequent rendering for fixation.

11.2 Interpretation and Processing

Interpretation is the process of removing fixation from information — detaching it from the carrier and making it free information. It is a non-physical influence over information. Interpretation is undetermined: different interpreters produce different results from the same information.

During processing, free information can be converted, combined, and transformed without constraint. New information can be created. Both rendered and created information can be lost. Processing, as described here, refers to operations within informational condition sets and is independent of specific physical instantiation mechanisms. It is the domain of free information.

11.3 Re-Fixing

Free information can be returned to a carrier through two methods. Sculpting alters the carrier itself — the carrier’s own properties are changed to hold the information. Writing adds properties to the carrier without changing it — the information is added as a temporary state that can be altered or removed while the carrier remains intact.

11.4 The Information Cycle

The complete path from source parameters through volatile states and back to fixation constitutes the information cycle. The cycle proceeds: physical rendering → interpretation → free processing → re-fixing.

Interpretation enters the cycle. The moment interpretation removes fixation and information becomes free, the outcome depends on the interpreter, the conversion lens, and the rendering choices made. The cycle is undetermined. The structural properties of information identified in this framework — superposition, undetermined states, observer-dependent results — emerge specifically from the cycle where interpretation is present.
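The cycle can be sketched as a small state machine in Python. The two states and the step names are hypothetical labels for the stages described above: interpretation frees fixed information, processing keeps it free, and either sculpting or writing re-fixes it. Steps that the cycle does not allow from a given state, such as processing information that is still fixed, are rejected.

```python
from enum import Enum


class State(Enum):
    FIXED = "fixed on a carrier"
    FREE = "free information"


# Hypothetical transition table: (current state, step) -> next state.
TRANSITIONS = {
    (State.FIXED, "interpret"): State.FREE,  # interpretation removes fixation
    (State.FREE, "process"): State.FREE,     # convert, combine, transform
    (State.FREE, "sculpt"): State.FIXED,     # re-fix by altering the carrier itself
    (State.FREE, "write"): State.FIXED,      # re-fix by adding removable properties
}


def run_cycle(steps: list[str], start: State = State.FIXED) -> State:
    """Apply steps in order, starting from physically rendered (fixed) information."""
    state = start
    for step in steps:
        if (state, step) not in TRANSITIONS:
            raise ValueError(f"step {step!r} is not permitted from state {state.name}")
        state = TRANSITIONS[(state, step)]
    return state


print(run_cycle(["interpret", "process", "process", "write"]))  # State.FIXED
```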

12. Fixation Spectrum

Fixation denotes resistance of an informational record to alteration under influence. Fixation is not binary. It exists on a continuous spectrum.

An informational record may exhibit varying levels of fixation relative to a specific rendering system and influence environment. Fixation is defined relative to the mediating properties available for influence, the magnitude of external or internal influencers, and the duration of interaction with those influencers.

Let I denote the influence magnitude required for alteration (not to be confused with the information I of Section 3) and T the interaction time required:

Φ ∝ I × T

Higher fixation corresponds to greater resistance — requiring stronger influence, longer exposure, or both. Lower fixation corresponds to increased susceptibility to alteration under weaker or shorter influence.

An informational record in full superposition exhibits minimal fixation. As stabilizing rendering occurs, fixation increases. Fixation represents the stabilization state of information within a given influence environment.
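Because the framework states only a proportionality, any numerical comparison needs a constant. In the Python sketch below, `k` is a hypothetical proportionality constant and the two records, with their influence and time requirements, are invented for illustration; only the ratio between the resulting values is meaningful.

```python
def fixation(influence_required: float, time_required: float, k: float = 1.0) -> float:
    """Phi = k * I * T, with k a hypothetical proportionality constant."""
    if influence_required < 0 or time_required < 0:
        raise ValueError("influence and time must be non-negative")
    return k * influence_required * time_required


engraving = fixation(influence_required=50.0, time_required=10.0)  # hypothetical values
chalk_mark = fixation(influence_required=2.0, time_required=1.0)   # hypothetical values
print(engraving / chalk_mark)  # 250.0: the engraving resists alteration far more
```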

13. Communicative Stabilization

Structural multiplicity arises when identical abstractions are rendered under differing internal condition sets. However, multiplicity does not necessarily persist.

When multiple renderers interact, their internal condition sets may partially align through communicative exchange. Let O(Lᵢ, Lⱼ) denote the degree of parameter overlap between condition sets of renderers i and j:

|Ω(A)| decreases as O(Lᵢ, Lⱼ) increases

Stabilization may occur through continuous convergence — gradual alignment of reconstruction parameters through interaction — or threshold stabilization — discrete reduction in multiplicity once sufficient parameter overlap is achieved.

Stabilization is not a physical transformation of the informational record. It is an alignment of reconstruction conditions across renderers. It does not guarantee correspondence to the source — if stabilization occurs around an incorrect shared interpretation, reconstruction may converge to an internally stable but factually incorrect state.
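A toy Python model of threshold-style stabilisation follows, reusing the meanings of "fire" from Section 8.2. It assumes that each admissible meaning can be described by a set of properties, that the parameters both renderers hold in common act as reconstruction constraints, and that overlap can be measured as the size of that common set. The property labels are hypothetical.

```python
MEANINGS_OF_FIRE = {
    "campfire": {"combustion", "outdoor"},
    "building fire": {"combustion", "structure"},
    "vehicle fire": {"combustion", "vehicle"},
    "chemical reaction": {"combustion", "reaction"},
    "employment termination": {"workplace", "dismissal"},
}


def admissible(shared: set[str]) -> set[str]:
    """Meanings consistent with every parameter both renderers share."""
    return {m for m, props in MEANINGS_OF_FIRE.items() if shared <= props}


renderer_i = {"combustion", "structure"}
renderer_j: set[str] = set()

# Each exchange adds one of renderer_i's parameters to renderer_j's condition set.
for learned in [None, "combustion", "structure"]:
    if learned is not None:
        renderer_j.add(learned)
    shared = renderer_i & renderer_j
    print(len(shared), len(admissible(shared)))  # overlap, |Omega(A)|
```

Overlap rises from 0 to 2 while |Ω(A)| falls from 5 to 1. Had the renderers aligned on `outdoor` while the source was a building fire, the same procedure would have converged just as firmly on `campfire`, the stable but incorrect outcome noted above.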

14. Structural Properties of Informational Behaviour

The following properties are derived from the fixation spectrum, the information cycle, and the dimensional structure of free information as established in the preceding sections. They describe how information behaves across all states and cycles. Each property has its own rules, mechanisms, and depth of investigation. The descriptions below establish each property and its core mechanism. Detailed expansion — including derived rules, formulas, edge cases, and cross-domain applications — will be provided in subsequent work.

14.1 Superposition

Free information exists as all possible states consistent with its constraints until rendered. Rendering fixes one state; different renderers may fix different states from the same free information. Superposition is graded by the principle of limited infinity through directional openness (i₀₀₀ through i₂₂₂), with maximum usable superposition at i₁₁₁ — three known parameters, three directions of expansion.
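A minimal sketch of superposition and rendering follows. It assumes that free information can be represented as the set of states consistent with its constraints, and that a renderer's condition set acts as a preference ranking over those states. The class `FreeInformation` and the ranking rule are hypothetical, and directional openness (i₀₀₀ through i₂₂₂) is not modeled.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet


@dataclass(frozen=True)
class FreeInformation:
    """Free information: every state consistent with its constraints, none yet fixed."""
    admissible: FrozenSet[str]

    def render(self, preference: Dict[str, int]) -> str:
        """Fix one admissible state: the one this renderer's conditions rank highest.
        Ties break alphabetically, so identical conditions always give identical results."""
        return min(self.admissible, key=lambda s: (-preference.get(s, 0), s))


info = FreeInformation(frozenset({"s1", "s2", "s3"}))
print(info.render({"s2": 3}))  # s2
print(info.render({"s3": 1}))  # s3: a different renderer fixes a different state
print(len(info.admissible))    # 3: rendering does not consume the free information
```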

14.2 Distributed Instantiation

An informational abstraction, once generated and transmitted, is not confined to a single carrier. It may be instantiated on multiple independent carriers within a shared parameter space. Each carrier resolves superposition locally under its own influence environment and rendering conditions. A single carrier unit stabilizes one admissible variant at a time, but distinct carriers may stabilize different admissible variants of the same abstraction without contradiction.

Multiplicity therefore arises through distributed instantiation rather than duplication of origin. The abstraction remains structurally consistent while its stabilized forms may differ across independent carriers.
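Distributed instantiation can be sketched as one immutable abstraction instantiated on several carriers, each resolving it under its own local influence environment. Modeling the environment as a weight per variant is an assumption made only for illustration; `Abstraction` and `Carrier` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Optional


@dataclass(frozen=True)
class Abstraction:
    name: str
    variants: FrozenSet[str]  # admissible variants; the abstraction itself never changes


@dataclass
class Carrier:
    label: str
    environment: Dict[str, int]  # assumed local influence: variant -> weight
    fixed: Optional[str] = None

    def instantiate(self, a: Abstraction) -> str:
        """Resolve superposition locally: keep the admissible variant this carrier favors."""
        self.fixed = max(sorted(a.variants), key=lambda v: self.environment.get(v, 0))
        return self.fixed


a = Abstraction("signal", frozenset({"v1", "v2"}))
carriers = [Carrier("c1", {"v1": 1}), Carrier("c2", {"v2": 1}), Carrier("c3", {})]
print([c.instantiate(a) for c in carriers])          # ['v1', 'v2', 'v1']
print(all(c.fixed in a.variants for c in carriers))  # True: different, yet all admissible
```

The stabilized forms differ across carriers, but each is an admissible variant of the same unchanged abstraction, so no contradiction arises.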

14.3 Local Exclusivity of Fixation

Fixation resolves superposition locally at the level of an individual carrier. A single carrier unit stabilizes one admissible variant of an abstraction at a given fixation state. Distinct carriers may simultaneously stabilize the same abstraction in identical or different admissible variants. Each stabilized instance is independent, as fixation is defined relative to the specific carrier and its influence environment.

Multiplicity of fixation therefore arises through multiplication of carriers, not through coexistence of multiple stabilized variants within a single carrier unit. Fixation is atomic per carrier and distributable across carriers.
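Local exclusivity can be expressed as a data-structure invariant. In the hypothetical registry below, stabilized variants are keyed by (carrier, abstraction), so a carrier holds exactly one variant of a given abstraction; fixing it again overwrites that single slot, and multiplicity only grows by adding carriers. For simplicity the sketch ignores fixation strength when overwriting.

```python
from typing import Dict, Set, Tuple


class FixationRegistry:
    """Tracks stabilized instances. Keying by (carrier, abstraction) makes fixation atomic:
    one variant per carrier, never two coexisting on the same carrier."""

    def __init__(self, admissible: Dict[str, Set[str]]):
        self.admissible = admissible  # abstraction -> admissible variants (assumed input)
        self.slots: Dict[Tuple[str, str], str] = {}

    def fix(self, carrier: str, abstraction: str, variant: str) -> None:
        if variant not in self.admissible[abstraction]:
            raise ValueError(f"{variant!r} is not an admissible variant of {abstraction!r}")
        # Re-fixing the same carrier replaces its single slot rather than adding another.
        self.slots[(carrier, abstraction)] = variant

    def instances(self, abstraction: str) -> Dict[str, str]:
        """All stabilized instances of an abstraction, one per carrier."""
        return {c: v for (c, a), v in self.slots.items() if a == abstraction}


reg = FixationRegistry({"A": {"x", "y"}})
reg.fix("carrier-1", "A", "x")
reg.fix("carrier-2", "A", "x")
reg.fix("carrier-3", "A", "y")
reg.fix("carrier-1", "A", "y")  # replaces carrier-1's variant; no coexistence
print(reg.instances("A"))  # {'carrier-1': 'y', 'carrier-2': 'x', 'carrier-3': 'y'}
```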

14.4 Remote Re-Rendering

An informational abstraction may be transmitted across carriers and reconstructed at a distant renderer without altering the originating instance. Rendering produces a new stabilized instance derived from the transmitted abstraction and the receiver’s internal condition set.

Remote re-rendering enables distributed multiplication of informational instances without transfer of the original stabilized state.
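The distinction between transmitting an abstraction and transferring a stabilized state can be sketched with immutable instances: only the abstraction travels, and the receiver builds a new instance from it and its own condition set. The names `Instance`, `transmit`, and `re_render`, and the `"unresolved"` fallback for a receiver lacking relevant conditions, are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Instance:
    """A stabilized instance: an abstraction plus the state a renderer fixed for it."""
    abstraction: str
    state: str


def transmit(instance: Instance) -> str:
    """Only the abstraction leaves the origin; its stabilized state stays behind."""
    return instance.abstraction


def re_render(abstraction: str, conditions: Dict[str, str]) -> Instance:
    """The receiver derives a new instance from the abstraction and its own conditions.
    'unresolved' is an assumed placeholder when the receiver has no relevant condition."""
    return Instance(abstraction, conditions.get(abstraction, "unresolved"))


origin = Instance("fire", "campfire")
remote = re_render(transmit(origin), {"fire": "vehicle fire"})
print(origin)  # Instance(abstraction='fire', state='campfire')
print(remote)  # Instance(abstraction='fire', state='vehicle fire')
```

Because `Instance` is frozen, the originating instance cannot be altered by the remote rendering.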

14.5 Trans-Object Transmission

Information may propagate through intervening objects when the carrier instantiating the informational state is compatible with the mediating properties of that object. Transmission does not require direct structural continuity between source and renderer. If the carrier supports propagation across an intervening structure, the informational state may traverse that structure without requiring its removal or modification.

The ability of information to pass through an object therefore depends not on the abstraction itself, but on the physical or structural compatibility between carrier properties and the mediating properties of the intervening object. Carrier suitability determines transmissibility.
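The claim that carrier suitability, not the abstraction, determines transmissibility can be made explicit in a sketch where the abstraction does not appear in the check at all. The set of media an obstacle "passes" stands in for its mediating properties; the example values (radio and sound passing a plasterboard wall, visible light not) are illustrative assumptions, and the names are hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable


@dataclass(frozen=True)
class Carrier:
    medium: str  # physical kind of carrier, e.g. "radio" or "visible light"


@dataclass(frozen=True)
class Obstacle:
    name: str
    passes: FrozenSet[str]  # carrier media this object's mediating properties let through


def can_traverse(carrier: Carrier, obstacle: Obstacle) -> bool:
    """Transmissibility depends only on carrier/object compatibility."""
    return carrier.medium in obstacle.passes


def path_transmissible(carrier: Carrier, obstacles: Iterable[Obstacle]) -> bool:
    """A path of intervening objects is traversable if every object is compatible."""
    return all(can_traverse(carrier, o) for o in obstacles)


wall = Obstacle("plasterboard wall", frozenset({"radio", "sound"}))
print(can_traverse(Carrier("radio"), wall))          # True
print(can_traverse(Carrier("visible light"), wall))  # False
```

The same abstraction could be instantiated on either carrier; only the choice of carrier changes the outcome.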

14.6 Logical Cross-Influence

Informational structures may exhibit cross-influence when logical dependencies exist between them. If two informational structures share parameters within a coherent logical framework, alteration of one structure may necessitate alteration of the other to preserve internal consistency. The influence is not mediated through direct carrier contact, but through the logical relations maintained within the rendering system.

Cross-influence therefore arises from structural dependency rather than physical interaction. Such dependency may be bidirectional, where changes propagate mutually, or unidirectional, where one structure constrains another without reciprocal effect. Logical cross-influence operates within condition-aligned informational spaces and depends entirely on shared parameter relations.
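Logical cross-influence can be sketched as reachability in a dependency graph: `depends[x]` lists the structures that x constrains, a one-way edge models unidirectional influence, and a pair of opposite edges models bidirectional influence. The function and the example structures are hypothetical; only logical links, not carrier contact, drive propagation.

```python
from collections import deque
from typing import Dict, Set


def affected_by(change: str, depends: Dict[str, Set[str]]) -> Set[str]:
    """Return every structure that must be revisited to keep consistency after `change`."""
    seen: Set[str] = set()
    queue = deque([change])
    while queue:
        node = queue.popleft()
        for nxt in depends.get(node, set()):
            if nxt not in seen and nxt != change:
                seen.add(nxt)
                queue.append(nxt)
    return seen


links = {
    "schedule": {"budget"},  # unidirectional: schedule constrains budget
    "budget": {"staffing"},
    "staffing": {"budget"},  # with the line above: budget <-> staffing, bidirectional
}
print(sorted(affected_by("schedule", links)))  # ['budget', 'staffing']
print(sorted(affected_by("budget", links)))    # ['staffing']: schedule is not affected
```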

14.7 Differential Inference

When two informational instances concerning the same subject are compared, differences between them may generate new informational structures. This generated information does not arise from direct observation of the source but from relational contrast between existing instances.

The created informational structure represents an inferred mechanism explaining the discrepancy. Its validity is not guaranteed by its generation. Confirmation requires independent source verification. Differential inference therefore produces interpretive constructs that may guide reasoning but must be distinguished from directly observed informational states.
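A minimal sketch of differential inference follows, assuming each informational instance is a mapping from parameters to values. Every disagreement yields an `Inference` record that starts unverified, since it comes from contrast rather than observation; `verify` marks it confirmed only if independent source data supports the later value. The names, the `"<absent>"` placeholder, and the verification rule are hypothetical, and the sketch records discrepancies rather than constructing explanatory mechanisms.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Inference:
    parameter: str
    before: str
    after: str
    verified: bool = False  # generated from contrast, not observation


def differential_inference(first: Dict[str, str], second: Dict[str, str]) -> List[Inference]:
    """One interpretive construct per parameter on which two instances disagree."""
    keys = sorted(first.keys() | second.keys())
    return [
        Inference(k, first.get(k, "<absent>"), second.get(k, "<absent>"))
        for k in keys
        if first.get(k) != second.get(k)
    ]


def verify(inference: Inference, source: Dict[str, str]) -> Inference:
    """Assumed rule: confirmed only if independent source data matches the later value."""
    inference.verified = source.get(inference.parameter) == inference.after
    return inference


report_1 = {"temperature": "normal", "alarm": "off"}
report_2 = {"temperature": "normal", "alarm": "on"}
print(differential_inference(report_1, report_2))
# [Inference(parameter='alarm', before='off', after='on', verified=False)]
```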

15. Integrated Illustration: The Abstraction "Fire"

Consider the abstraction A = "Fire."

Dimensional Collapse: The event of fire is a multi-parameter phenomenon — chemical reaction type, heat magnitude, light emission, oxygen consumption, fuel state change, temporal progression, spatial extent, risk level, required response. When collapsed to the single lexical token "fire," most descriptive directions are absent from the carrier.

Informational Superposition: Different renderers under distinct condition sets produce different reconstructed states. R(A, L₁) = small campfire in a recreational context. R(A, L₂) = vehicle fire in an urban emergency context. R(A, L₃) = employment termination in an organizational context. R(A, L₄) = rapid oxidation reaction in a chemical context. |Ω(A)| > 1. Informational superposition exists prior to stabilization.
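The four reconstructions above can be tabulated directly to show |Ω(A)| > 1. In this sketch each condition set is reduced to a label, and the lookup table is a hypothetical stand-in for R(A, L); the rendering is deterministic, in line with the Law of Informational Constancy.

```python
from typing import Dict

# Hypothetical stand-in for R(A, L): each condition set label fixes one reconstruction.
RECONSTRUCTIONS: Dict[str, str] = {
    "L1": "small campfire (recreational)",
    "L2": "vehicle fire (urban emergency)",
    "L3": "employment termination (organizational)",
    "L4": "rapid oxidation reaction (chemical)",
}


def render(token: str, condition_set: str) -> str:
    """R(A, L): deterministic, so identical condition sets give identical results."""
    if token != "fire":
        raise ValueError("this sketch only covers the abstraction 'fire'")
    return RECONSTRUCTIONS[condition_set]


omega = {render("fire", label) for label in RECONSTRUCTIONS}
print(len(omega))  # 4, so |Ω(A)| > 1
print(render("fire", "L2") == render("fire", "L2"))  # True
```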

Information Density: Where full shared context exists, the single token may recover numerous parameters — object identity, scale, threat level, required action, temporal urgency, spatial scope. Density is high where context is rich and approaches zero where context is absent.
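The density contrast can be worked through numerically, taking information density as recovered parameters per carrier unit, with the single token "fire" as one carrier unit. The assumption that a parameter is recovered only when the shared context supplies it, and the function names, are illustrative.

```python
from typing import Set


def information_density(recovered: Set[str], carrier_units: int) -> float:
    """Recovered parameters per carrier unit (here, lexical tokens)."""
    if carrier_units <= 0:
        raise ValueError("carrier_units must be positive")
    return len(recovered) / carrier_units


def recover(shared_context: Set[str], candidates: Set[str]) -> Set[str]:
    """Assumed rule: a candidate parameter is recovered only if shared context supplies it."""
    return candidates & shared_context


PARAMS = {"object identity", "scale", "threat level", "required action",
          "temporal urgency", "spatial scope"}

rich = information_density(recover(PARAMS, PARAMS), carrier_units=1)
absent = information_density(recover(set(), PARAMS), carrier_units=1)
print(rich, absent)  # 6.0 0.0
```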

Abstraction Deviation: Between L₃ (employment) and L₁ (combustion), matching parameters are limited to the lexical token itself. Mismatching parameters are numerous. Deviation is high. The abstraction does not function as a reliable key across these condition sets without additional context.
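To illustrate why deviation is high here, the sketch below uses a hypothetical normalization: deviation is the share of compared parameters whose values differ, so 0.0 means full agreement and 1.0 means nothing matches. This formula and the parameter values for L₁ and L₃ are illustrative assumptions, not the framework's own definition.

```python
from typing import Dict


def abstraction_deviation(r1: Dict[str, str], r2: Dict[str, str]) -> float:
    """Share of compared parameters whose values differ (hypothetical normalization)."""
    keys = r1.keys() | r2.keys()
    if not keys:
        return 0.0
    mismatching = sum(1 for k in keys if r1.get(k) != r2.get(k))
    return mismatching / len(keys)


# Illustrative reconstructions; only the lexical token is shared.
L1 = {"token": "fire", "domain": "combustion", "scale": "small",
      "risk": "low", "response": "none"}
L3 = {"token": "fire", "domain": "employment", "scale": "one person",
      "risk": "career", "response": "HR process"}
print(abstraction_deviation(L1, L3))  # 0.8
```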

Communicative Stabilization: If renderers exchange validation signals — "It’s the building on the left that’s on fire" — internal condition sets converge, superposition reduces, and multiplicity resolves toward a shared reconstruction. Stabilization does not guarantee correctness: if the validation signal is itself incorrect, reconstruction converges to an internally stable but factually incorrect state.

Condition Identification: The observation "there is smoke" is valid. The inference "there is fire" is an interpretation of conditions. Additional conditions must be identified before the Law of Informational Constancy can be applied reliably. The observation takes precedence. Investigation requires locating the actual conditions.

Differential Inference: A witness who had previously been told that the building was structurally sound receives the new information "fire detected inside." The difference generates the interpretation "the building must be compromised." No direct observation of structural compromise exists. Confirmation requires direct inspection. The created information may be entirely wrong.

The abstraction "fire" demonstrates, within a single scenario: dimensional collapse, informational superposition, density variation, abstraction deviation, communicative stabilization, condition identification, fixation spectrum effects, and differential inference — each operating consistently with the framework’s principles.

16. Conclusion

This paper has established a structural framework for informational behavior at the level of generation, transmission, representation, reconstruction, stabilization, and measurable consequence.

The Law of Informational Constancy provides the foundational determinism principle from which traceability and the precedence of observation over interpretation are derived. The transmission chain describes the structural path through which all informational access is mediated. The dimensional model — collapse, the Infinite Complexity Barrier, abstraction as key-based recovery, and expansion — explains how information loses content through encoding, gains ambiguity through decoding, and how shared context enables communication despite dimensional loss.

Quantitative measures — information density, meaning uncertainty, information transmitted, transmission fidelity, transmission balance, and abstraction deviation — provide falsifiable metrics applicable to any domain where information is encoded, transmitted, and reconstructed. Every formula operates on countable, measurable quantities.

The seven structural properties describe how information acts across all states and cycles. These properties are derived from the fixation spectrum, the information cycle, and the dimensional structure of free information. Each property has rules, mechanisms, and cross-domain applications that warrant further formal development.

The framework is internally complete within the defined operational scope of this paper. It defines lawful reconstruction behavior, measurable stability, and structured multiplicity without reliance on metaphysical assumptions or domain-specific ontology. Its formal structure suggests broader avenues of exploration in the mathematical characterization of internal condition sets, reconstruction dynamics, and system-level informational behavior.

Within a fully specified and identical condition set, identical informational structures are produced. Variation arises from structured differences in those conditions. Within this principle lies a coherent foundation for the systematic study of informational behavior.

Acknowledgements

All intellectual property deposits referenced within this framework are registered under the name Pavel Ovcharov, NORFIAD, February 2026.

References

1. Shannon, C. E. (1948). A mathematical theory of communication. Bell System Technical Journal, 27, 379–423, 623–656.

2. Floridi, L. (2010). Information: A very short introduction. Oxford University Press.

3. Floridi, L. (2011). The philosophy of information. Oxford University Press.

4. Bateson, G. (1972). Steps to an ecology of mind. University of Chicago Press.

5. Dretske, F. (1981). Knowledge and the flow of information. MIT Press.

6. Kolmogorov, A. N. (1965). Three approaches to the quantitative definition of information. Problems of Information Transmission, 1(1), 1–7.

7. Chaitin, G. J. (1966). On the length of programs for computing finite binary sequences. Journal of the ACM, 13(4), 547–569.

8. Wiener, N. (1948). Cybernetics: Or control and communication in the animal and the machine. MIT Press.

9. Zurek, W. H. (1989). Algorithmic randomness and physical entropy. Physical Review A, 40(8), 4731–4751.

10. Bar-Hillel, Y., & Carnap, R. (1953). Semantic information. British Journal for the Philosophy of Science, 4(14), 147–157.

11. Landauer, R. (1961). Irreversibility and heat generation in the computing process. IBM Journal of Research and Development, 5(3), 183–191.

12. Brillouin, L. (1956). Science and information theory. Academic Press.

13. Stonier, T. (1990). Information and the internal structure of the universe. Springer.

14. Frieden, B. R. (1998). Physics from Fisher information. Cambridge University Press.

15. von Foerster, H. (1960). On self-organising systems and their environments. In M. C. Yovits & S. Cameron (Eds.), Self-organising systems (pp. 31–50). Pergamon Press.

16. MacKay, D. J. C. (2003). Information theory, inference, and learning algorithms. Cambridge University Press.

17. Boulding, K. E. (1956). The image: Knowledge in life and society. University of Michigan Press.

18. Dennett, D. C. (1991). Consciousness explained. Little, Brown and Company.

19. Penrose, R. (1989). The emperor’s new mind. Oxford University Press.

20. Luhmann, N. (1990). Essays on self-reference. Columbia University Press.